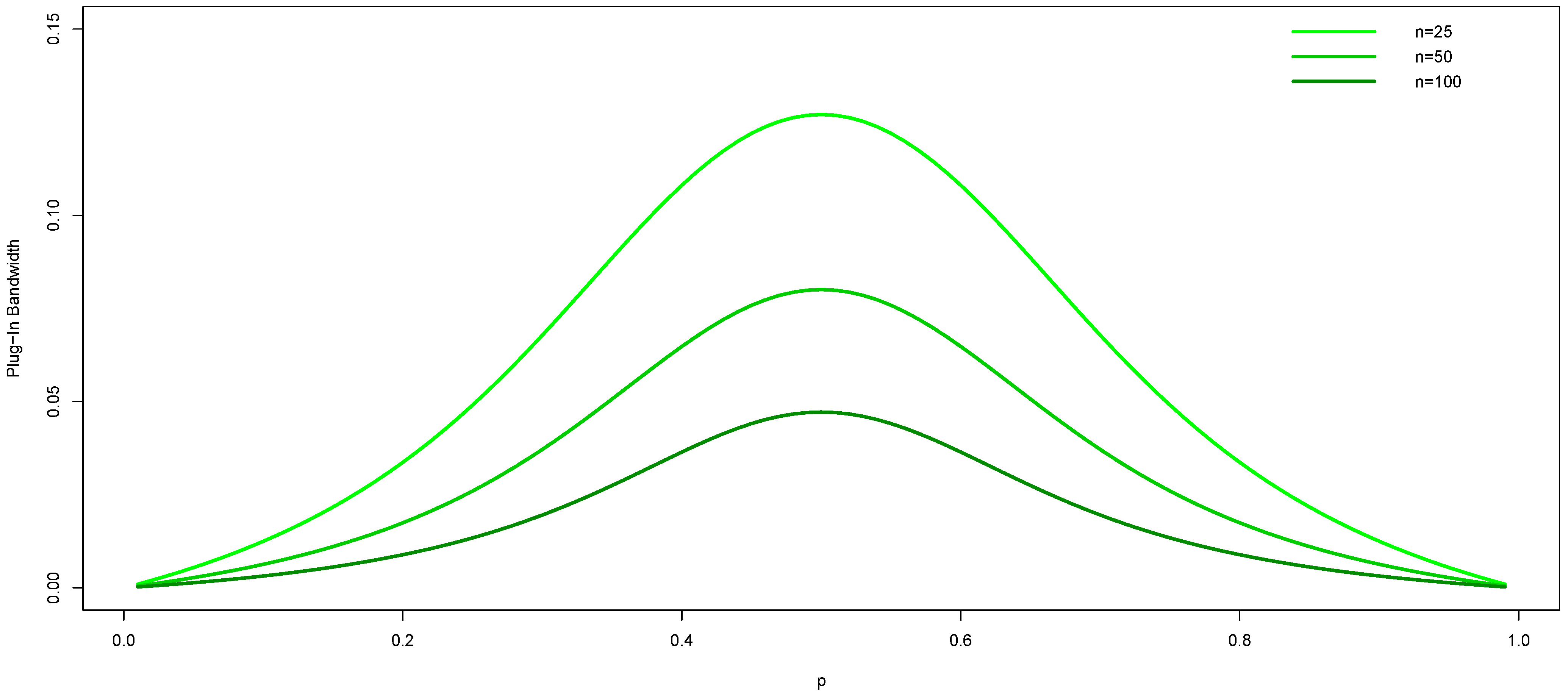

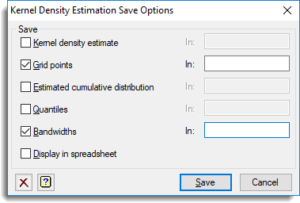

Kernel density estimation is a mathematical process for finding an estimate of the probability density function of a random variable. The estimation attempts to infer characteristics of a population based on a finite data set.

The kernel density estimator can be used with any of the valid distance metrics (see DistanceMetric for a list of available metrics), though the results are properly normalized only for the Euclidean metric. One particularly useful metric is the Haversine distance, which measures the angular distance between points on a sphere.

We study nonparametric estimation of density functions for undirected dyadic random variables (i.e., random variables defined for all n ≡ N(N − 1)/2 unordered pairs of agents/nodes in a weighted network of order N). These random variables satisfy a local dependence property: any random variables in the network that share one or two indices may be dependent, while those sharing no indices in common are independent. In this setting, we show that density functions may be estimated by an application of the kernel estimation method of Rosenblatt (1956) and Parzen (1962). We suggest an estimate of their asymptotic variances inspired by a combination of (i) Newey’s (1994) method of variance estimation for kernel estimators in the “monadic” setting and (ii) a variance estimator for the (estimated) density of a simple network first suggested by Holland and Leinhardt (1976). More unusual are the rates of convergence and asymptotic (normal) distributions of our dyadic density estimates. Specifically, we show that they converge at the same rate as the (unconditional) dyadic sample mean: the square root of the number, N, of nodes. This differs from the results for nonparametric estimation of densities and regression functions for monadic data, which generally have a slower rate of convergence than their corresponding sample mean.
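To make the dyadic setting concrete, here is a minimal sketch of a Rosenblatt-Parzen style estimate computed over all unordered pairs of nodes. It is an illustration under stated assumptions, not the estimator's reference implementation: the Gaussian kernel, the bandwidth `h=0.5`, the function names, and the simulated data rule W_ij = A_i + A_j + V_ij are all hypothetical choices made for this example.

```python
import math
import random
from itertools import combinations


def gaussian_kernel(u):
    """Standard normal density, used here as the kernel K (an assumed choice)."""
    return math.exp(-0.5 * u * u) / math.sqrt(2.0 * math.pi)


def dyadic_kde(w, dyads, h):
    """Kernel density estimate at w from a dict {(i, j): W_ij} with i < j."""
    n = len(dyads)  # n = N(N - 1)/2 unordered pairs
    return sum(gaussian_kernel((w - w_ij) / h) for w_ij in dyads.values()) / (n * h)


def simulate_dyads(num_nodes, seed=0):
    """Hypothetical data: W_ij = A_i + A_j + V_ij with independent N(0, 1) draws.

    Pairs sharing a node share an A term, so they are dependent;
    pairs with no node in common are independent.
    """
    rng = random.Random(seed)
    a = [rng.gauss(0.0, 1.0) for _ in range(num_nodes)]
    return {(i, j): a[i] + a[j] + rng.gauss(0.0, 1.0)
            for i, j in combinations(range(num_nodes), 2)}


dyads = simulate_dyads(num_nodes=50)
grid = [-4.0 + 0.5 * k for k in range(17)]  # evaluation points from -4 to 4
density = [dyadic_kde(w, dyads, h=0.5) for w in grid]
```

The sum runs over n = N(N − 1)/2 dyads, yet only N node-level draws drive the dependence between them, which gives some intuition for why the rate of convergence is tied to the number of nodes rather than the number of pairs.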
Kernel density estimation (KDE) is a non-parametric method for estimating the probability density function (pdf) of a random variable based on a random sample, using some kernel K and some smoothing parameter (aka bandwidth) h > 0. It estimates the density of a population and typically gives a more accurate picture than a histogram.

A kernel is a probability density function (pdf) f(x) which is symmetric around the y axis, i.e. f(−x) = f(x).

Let x1, x2, …, xn be a random sample from some distribution whose pdf f(x) is not known. The kernel density estimate of f at x is the average of kernels centered at the data points: (1/(nh)) · [K((x − x1)/h) + … + K((x − xn)/h)]. Stute obtained in 1982 some valuable results on convergence rates of the estimator, depending on the sample size, kernel and true density.

Some commonly used kernels are listed in Figure 1. Note that seven of the kernels restrict the domain to values |u| ≤ 1. The efficiency column in the figure displays the efficiency of each of the kernel choices as a percentage of the efficiency of the Epanechnikov kernel. The Epanechnikov kernel is the most efficient in a sense that we won’t go into here. The paper shows that the sequence using the kernel optimal at each fixed sample size is asymptotically more efficient than a sequence generated by changing the bandwidth of a fixed kernel shape, regardless of the kernel shape.

The results are sensitive to the value chosen for h. Rules for choosing an optimum value for h are complex, but the following are some simple guidelines. You should use a larger bandwidth value when the sample size is small and the data are sparse. This results in a larger standard deviation; the estimate places more weight on the neighboring data values. You can use a smaller bandwidth value when the sample size is large and the data are densely packed. This results in a smaller standard deviation; the estimate places more weight on the specific data value and less on the neighboring data values. Bandwidths that are too small result in a pdf that is too spiky, while bandwidths that are too large result in a pdf that is over-smoothed.

If f(x) follows a normal distribution, then an optimal estimate for h is h = 1.06 · s · n^(−1/5), where s is the standard deviation of the sample. A commonly used, more robust variant (Silverman's rule of thumb) is h = 0.9 · s* · n^(−1/5), where s* = min(s, IQR/1.34) and IQR is the interquartile range of the sample data.

This free online software (calculator) performs the Kernel Density Estimation for any data series according to the following Kernels: Gaussian, Epanechnikov.

Silverman, B. W. (1986) Density estimation for statistics and data analysis. Monographs on Statistics and Applied Probability, London: Chapman and Hall.
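The definition and the bandwidth guidance can be tried out with a short sketch. It assumes the standard form of the estimator, (1/(nh)) · Σ K((x − xi)/h), and the robust rule of thumb h = 0.9 · s* · n^(−1/5) with s* = min(s, IQR/1.34). The function names are hypothetical and the sample values are arbitrary numbers chosen only for illustration.

```python
import math
import statistics


def gaussian(u):
    """Gaussian kernel: the standard normal pdf, symmetric about 0."""
    return math.exp(-0.5 * u * u) / math.sqrt(2.0 * math.pi)


def epanechnikov(u):
    """Epanechnikov kernel: supported on |u| <= 1, zero elsewhere."""
    return 0.75 * (1.0 - u * u) if abs(u) <= 1.0 else 0.0


def rule_of_thumb_bandwidth(sample):
    """h = 0.9 * s_star * n^(-1/5), with s_star = min(s, IQR / 1.34)."""
    n = len(sample)
    s = statistics.stdev(sample)  # sample standard deviation
    q1, _, q3 = statistics.quantiles(sample, n=4)  # quartile cut points
    s_star = min(s, (q3 - q1) / 1.34)
    return 0.9 * s_star * n ** (-0.2)


def kde(x, sample, h, kernel=gaussian):
    """Kernel density estimate at x: average of kernels centered at each x_i."""
    return sum(kernel((x - xi) / h) for xi in sample) / (len(sample) * h)


# Arbitrary illustrative values, not data from any real study.
sample = [2.1, 2.4, 2.9, 3.0, 3.3, 3.8, 4.0, 4.6, 5.2, 6.1]
h = rule_of_thumb_bandwidth(sample)
estimate_gauss = kde(3.5, sample, h)
estimate_epan = kde(3.5, sample, h, kernel=epanechnikov)
```

Re-running `kde` with `h / 4` and `4 * h` over a grid of x values is a quick way to see the spiky and over-smoothed extremes described above.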
